Smart Glasses - Multi-Modal Product Design

A wearable device that enhances user capabilities through multimodal integration and haptic feedback to address a real-world challenge.

Goal

Design a wearable device that gives users a sense of augmented capability, using multimodal integration principles and haptic technology to identify, investigate, and address a tangible real-world challenge.

Process

Conceptualized and presented a haptic-enabled road-safety eyewear solution for pedestrians as part of the Multi-modal Design coursework at Bentley University.

Role

Sr. UX Designer, responsible for concept generation, product strategy, user research, 3D modeling and printing, and project documentation.

Pedestrians face an ongoing risk of collisions with vehicles. Contributing factors include poor lighting, adverse weather, missing sidewalks, and dangerous driving behaviors such as reckless or impaired driving.

Approach: The solution centered on a wearable that does two things at once: it heightens a pedestrian's situational awareness, and it makes them more visible to others (their "conspicuity"). The project was anchored around three core user stories:

Storyboarding, Functional Analysis, Morphological Chart, 3D Prototyping, Arduino Programming, Usability Testing

I led the initial phases independently: ideating, storyboarding, performing the functional analysis, and completing the morphological chart. Once team members came on board, I moved into a coordination role. In that role I defined action items, led the usability testing sessions, and managed the research paper documentation.

After defining the problem, I developed storyboards for a wearable that would heighten user alertness through haptic feedback triggered by detected vehicle movement. The intent was to give pedestrians a sharper awareness of their surroundings while notifying them of potential hazards, ultimately lowering the likelihood of accidents.

Following this, I carried out a functional analysis to map out the variables influencing user behavior and to generate hypotheses around the wearable's intended functionality.

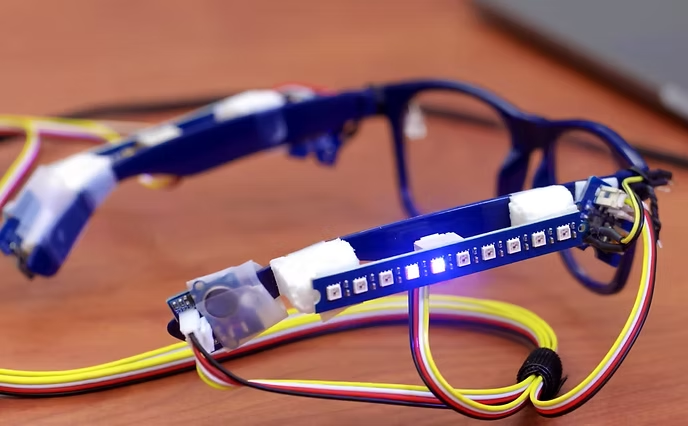

Our team then conducted human factors research focused on vibrotactile sensitivity in the head region, peripheral vision, and visual attention paradigms. Based on these findings, the eyewear mechanism was designed around three functional components:

The LED strips were designed to run continuously, so the Arduino code needed timing logic that let strip illumination run alongside the peripheral LED and vibration motor triggers. This was achieved with timestamps and global variables that tracked when each action last executed in the main Arduino loop. The same approach also contributed to faster sensor and motor response times.
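The timestamp pattern described above can be sketched in portable C++. This is a minimal illustration under stated assumptions, not the project's actual Arduino code:

- The `Millis` type and the `now` argument stand in for Arduino's `millis()`.
- The intervals, the `advanceStrip`/`checkAlerts` helpers, and the counters are hypothetical placeholders for the real strip, LED, and motor code.

Each job keeps a global timestamp of its last run and fires only when its interval has elapsed. As a result, no job blocks the others the way a `delay()` call would.

```cpp
#include <cassert>
#include <cstdint>

// Simulated stand-in for Arduino's millis(); on the board, `now` would come from millis().
using Millis = std::uint32_t;

// Hypothetical intervals, chosen for illustration only (not taken from the project).
const Millis STRIP_FRAME_MS = 20;  // how often the LED strip animation advances
const Millis ALERT_CHECK_MS = 50;  // how often the sensor is polled and the LED/motor updated

// Global timestamps recording when each job last ran.
Millis lastStripFrame = 0;
Millis lastAlertCheck = 0;

// Hypothetical counters standing in for real hardware actions.
int stripFrames = 0;
int alertChecks = 0;

void advanceStrip() { ++stripFrames; }  // would push the next LED strip frame
void checkAlerts() { ++alertChecks; }   // would read the sensor and drive the peripheral LED and motor

// One pass of the main loop. Each job runs only if its interval has elapsed,
// so neither job waits on the other.
void loopOnce(Millis now) {
    // Unsigned subtraction keeps this check correct when the millisecond counter wraps.
    if (now - lastStripFrame >= STRIP_FRAME_MS) {
        lastStripFrame = now;
        advanceStrip();
    }
    if (now - lastAlertCheck >= ALERT_CHECK_MS) {
        lastAlertCheck = now;
        checkAlerts();
    }
}
```

On the board, `loopOnce(millis())` would be called from `loop()`. The check is written as `now - last >= interval` rather than `now >= last + interval`. Because the arithmetic is unsigned, the subtraction form stays correct when `millis()` rolls over.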

Early prototyping revealed sizing challenges, because the hardware modules had to fit precisely within the eyewear frame. Several dimensional adjustments were made before moving forward.

The initial prototype was evaluated with participants aged 18 to 27. Key findings included:

The vibrational alerts were evaluated against heuristics of granularity, frequency, and direction:

Assigning responsibilities based on each team member's strengths made the workflow smoother and more efficient. Peer-reviewing every deliverable before submission helped us maintain quality standards. Submitting early drafts for review also significantly improved the quality of the final output.

For future iterations, the following enhancements are planned: